Who out of the more advanced coders would like to take a shot at “Mode7” on Pokitto?

I know my skills aren’t anywhere near there, but I’m sure a couple of you here are completely capable of it

According to Wikipedia it’s a simple 2D matrix operation that reduces to:

xd = x - x0

yd = y - y0

xr = (a * xd + b * yd) + x0

yr = (c * xd + d * yd) + y0

Where x and y are the input coordinates and xr and yr are the output coordinates, but x0 and y0 are the ‘origin’, which apparently isn’t always zero, so that bit is a bit murky.
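For anyone who finds code easier to read than formulas, here is a minimal sketch of the same four lines in C++. It uses floats purely for clarity, and the names `Point`, `Matrix2x2` and `transformAboutOrigin` are made up for illustration rather than taken from any Pokitto API.

```cpp
// Illustrative types only; floats are used for readability, not speed.
struct Point { float x; float y; };

struct Matrix2x2 { float a; float b; float c; float d; };

// Transforms p by m, treating 'origin' as the centre of the transformation.
Point transformAboutOrigin(const Matrix2x2 & m, Point p, Point origin)
{
    // Make the coordinates relative to the origin.
    const float xd = p.x - origin.x;
    const float yd = p.y - origin.y;

    // Apply the matrix, then move back to absolute coordinates.
    return Point {
        (m.a * xd + m.b * yd) + origin.x,
        (m.c * xd + m.d * yd) + origin.y
    };
}
```

With `a = 0, b = -1, c = 1, d = 0` (a quarter turn) and an origin of (10, 10), the point (11, 10) ends up at (10, 11), so the point swings around the origin rather than around (0, 0).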

I’ve got a feeling it’s actually a 3x2 matrix and Wikipedia is being irritatingly vague or odd, since the addition of x0 and y0 at the end certainly coincides with what would typically be a translation.

In which case x and y would be absolute coordinates and the subtraction of x0 and y0 would represent a transformation from absolute coordinates to relative coordinates, so that’s certainly a plausible explanation.

Interestingly it uses fixed points in (from what I gather) a signed Q7x8 format.
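As a rough sketch of what that format means in practice: a signed 16-bit value with 8 fractional bits, where 1.0 is stored as 0x0100. The code below is hand-rolled for illustration and is not the API of any existing library; `Fixed8`, `fromInt` and `multiply` are hypothetical names.

```cpp
#include <cstdint>

// Signed fixed point with 8 fractional bits stored in 16 bits (assumed layout).
using Fixed8 = std::int16_t;

constexpr int fractionalBits = 8;

// Converts a whole number to fixed point, e.g. 1 becomes 0x0100.
constexpr Fixed8 fromInt(int value)
{
    return static_cast<Fixed8>(value * (1 << fractionalBits));
}

// Multiplies two fixed point values.
// The raw product has 16 fractional bits, so it is widened to 32 bits
// and then shifted back down to 8 fractional bits.
// Note: this relies on >> being an arithmetic shift for negative values,
// which is what mainstream compilers do (and what C++20 guarantees).
constexpr Fixed8 multiply(Fixed8 left, Fixed8 right)
{
    return static_cast<Fixed8>((static_cast<std::int32_t>(left) * right) >> fractionalBits);
}
```

For example, 1.5 is 0x0180 and 2.0 is 0x0200, and `multiply(0x0180, 0x0200)` gives 0x0300, which is 3.0.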

Which is rather fortunate considering what my most popular GitHub repo is.

Essentially I think I could do it, but I haven’t written a screen mode before, so I’d have to take time to learn how to do that first. I’d also need to get FixedPoints running on Pokitto, which I always intended to do but haven’t got round to yet, because I wanted to either refine it first or make Pokitto use the general C++ version.

(Maybe I ought to make a Pokitto specific version?)

Essentially the matrix-based affine transformation is the easy bit.

Out of interest, does anybody understand a word I’m saying?

I’m starting to scare myself with all this maths.

Well, as a beginner I can say I understand the first bit, in that it is simply a math formula.

After that there are too many insider terms for me to follow.

I do make CAD drawings for my work, and from that I do get the logic of rotating and shifting a plane / big sprite.

But still, a matrix-based affine transformation??? I have only a vague idea what you mean…

I recognise a lot of the words and understand that they make sentences…

I understand the part about the affine transformation. However, I think that your feeling about it being a 3x2 matrix might be wrong. You are starting from a regular 2D image and it is transformed into another 2D image. The matrix is different for every scanline, I think.


Yes, it’s a transliteration of Wikipedia’s formula, getting rid of the matrix notation in favour of simpler notation that demystifies the matrix operation.

To be honest I have no idea what makes a transformation ‘affine’, but it’s the right term and it sounds smart :P

Matrices and vectors are to do with geometry.

A vector represents either a point in space or a direction of travel, and in either case it’s basically a set of coordinates.

A matrix is a grid of numbers that represents a transformation such as scaling, rotation or skewing (among other possibilities). Translation can be included too by adding an extra column. The transformation is applied by ‘matrix multiplication’, which involves multiplying the components of a matrix with the components of a vector in the correct order.

Ironically they’re the reason your CAD drawings can be rendered onto the screen.

GPUs do pretty much all 3D model manipulation with vectors and matrices.

(Which is one of the main reasons I learnt about them in the first place - 3D rendering.)

Why so?

From what I gather, the matrix would look like this:

[sx, ky, tx]

[kx, sy, ty]

Where:

sx = x scale

ky = y skew

tx = x translation

kx = x skew

sy = y scale

ty = y translation

What I gather from Wikipedia’s formula (if I’m reading the explanation right) is that the x - x0 part translates the absolute coordinate x into a coordinate relative to x0 (the origin). The matrix multiplication then performs the rotation and scaling before applying the translation, which inverts the original translation, making the coordinate absolute again.

So basically I’m not saying the 3x2 matrix is equivalent to the formula, I’m saying it’s equivalent to the formula if the prior step of doing a translation to get the coordinates in line with the origin is done first.

(Though there is a way to include that in the matrix using matrix multiplication.)
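To make that concrete, here is a hedged sketch of folding the origin into the translation column. Expanding `a * (x - x0) + b * (y - y0) + x0` gives `a * x + b * y + (x0 - (a * x0 + b * y0))`, so the bracketed part can be stored as tx (and likewise for ty). The type and function names are illustrative only, and floats are used for readability.

```cpp
struct Point { float x; float y; };

// Rows laid out as in the post: [sx, ky, tx] and [kx, sy, ty].
struct AffineMatrix
{
    float sx, ky, tx;
    float kx, sy, ty;
};

// Builds a matrix equivalent to "subtract origin, apply a/b/c/d, add origin".
AffineMatrix aboutOrigin(float a, float b, float c, float d, Point origin)
{
    return AffineMatrix {
        a, b, origin.x - (a * origin.x + b * origin.y),
        c, d, origin.y - (c * origin.x + d * origin.y)
    };
}

// Applies the matrix directly to absolute coordinates.
Point apply(const AffineMatrix & m, Point p)
{
    return Point {
        m.sx * p.x + m.ky * p.y + m.tx,
        m.kx * p.x + m.sy * p.y + m.ty
    };
}
```

With a quarter turn (`a = 0, b = -1, c = 1, d = 0`) about (10, 10), the translation column works out as tx = 20 and ty = 0, and applying the matrix to (11, 10) gives (10, 11), the same answer as doing the subtract, multiply, add steps separately.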

I’m pretty sure it would be the same matrix, that’s how it works in modern day GPUs, to which Mode 7 was a precursor.

Additional resources:

- For those who don’t understand matrices, this place is a gold mine.

- Found a neat SE Answer that gives much of the maths in a simple way.

- Tonc is very descriptive but sadly very dry too. From what I can gather it is basically a regular perspective projection, the special bit is just that it uses fixed point arithmetic.

- As a bonus, Jorge Rodriguez’s MFGD series - the early matrix bits.

I was thinking the matrix had to change every scanline because affine transformations preserve parallel lines. In the pseudo-3D effect achieved with mode 7, vertical parallel lines in the original image are obviously no longer parallel after the transformation.

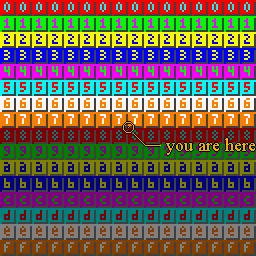

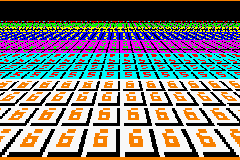

original:

after transformation:

very interesting topic btw. @Pharap I will read those links you posted

I would assume that mode7 would also have to be a tiled mode, mostly because when scaling an image down, the source would have to be way larger than the screen, and we don’t have a lot of RAM to play with.

Several sources suggest projection isn’t affine so I’m assuming the sources suggesting that mode 7 is affine are mislabelling without realising.

Whether it’s affine or not, the maths holds strong so I’m reasonably certain there’s an equivalent 3x2 matrix.

The original mode 7’s background is in fact tiled, so that seems reasonable.
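A small sketch of why tiling helps with RAM, assuming 8x8 tiles, a 32x32 tile map and one byte per pixel (all of these sizes are assumptions for illustration, not the SNES or Pokitto figures). The map itself is 1024 bytes, whereas the 256x256 image it describes would be 65536 bytes if stored as a flat bitmap.

```cpp
#include <cstdint>

constexpr int tileSize = 8;  // pixels per tile edge (assumed)
constexpr int mapSize = 32;  // tiles per map edge (assumed, power of two)

// Returns the colour index at map pixel (x, y), wrapping at the map edges.
// tileMap holds one tile index per map cell; tileSet holds the tile pixels.
std::uint8_t samplePixel(const std::uint8_t * tileMap, const std::uint8_t * tileSet, int x, int y)
{
    const int mapPixels = tileSize * mapSize;

    // Masking also wraps negative coordinates because the size is a power of two.
    const int wx = x & (mapPixels - 1);
    const int wy = y & (mapPixels - 1);

    // Find which tile covers the pixel, then the pixel within that tile.
    const int tileIndex = tileMap[(wy / tileSize) * mapSize + (wx / tileSize)];
    const int offset = (wy % tileSize) * tileSize + (wx % tileSize);
    return tileSet[tileIndex * tileSize * tileSize + offset];
}
```

The tile set costs extra on top of the map, but only one copy of each distinct tile is needed, so repeated scenery is close to free.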

Some thoughts:

- The screen pulls the data from the source, the source doesn’t push data to the screen.

- The transformation only needs to be calculated once per frame, though it still has to be applied once per pixel, which might be slow.
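A rough sketch of both points, assuming a 110x88 screen buffer, a 64x64 source image and 8.8 fixed point values held in 32-bit integers (the sizes and all names here are assumptions for illustration). The loop visits every screen pixel and pulls the matching source pixel, and because the matrix is fixed for the frame, each pixel only costs two additions rather than a full matrix multiply.

```cpp
#include <cstdint>

constexpr int screenWidth = 110;  // assumed screen buffer size
constexpr int screenHeight = 88;
constexpr int sourceSize = 64;    // assumed square, power of two source image

// a, b, c, d and the start offsets are 8.8 fixed point held in 32-bit ints.
struct FrameMatrix { std::int32_t a, b, c, d, startU, startV; };

void drawFrame(std::uint8_t * screen, const std::uint8_t * source, const FrameMatrix & m)
{
    for (int y = 0; y < screenHeight; ++y)
    {
        // Source position of the first pixel on this row.
        std::int32_t u = m.startU + m.b * y;
        std::int32_t v = m.startV + m.d * y;

        for (int x = 0; x < screenWidth; ++x)
        {
            // Drop the fractional bits and wrap into the source image.
            const int sx = (u >> 8) & (sourceSize - 1);
            const int sy = (v >> 8) & (sourceSize - 1);
            screen[y * screenWidth + x] = source[sy * sourceSize + sx];

            // Moving one pixel right on screen moves (a, c) in the source.
            u += m.a;
            v += m.c;
        }
    }
}
```

Passing `FrameMatrix { 256, 0, 0, 256, 0, 0 }` (the identity in 8.8) simply copies the source, repeating it where the screen is larger than the image.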

So it might have to be 110x88 as well as tiled?

Yes, the mode7 wiki says they got this pseudo-3D effect by changing the matrix in each scanline. In a simple case, just changing the scaling in each scanline would get that perspective image you have.

This is also how the road is drawn in Outrun-type games. Just scale the road tile scanline to a smaller one if its position on screen is higher (i.e. a smaller y coordinate), supposing the scanlines are horizontal, unlike the Pokitto screen buffer.
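As a hedged sketch of the per-scanline scaling idea: the scale for each ground row can be worked out once and kept in a small table of 16-bit 8.8 fixed point values. The horizon position, camera height and the simple `height / rowsBelowHorizon` formula are all assumptions chosen to keep the example short.

```cpp
#include <array>
#include <cstdint>

constexpr int screenHeight = 88;  // assumed screen height
constexpr int horizon = 24;       // assumed screen row of the horizon
constexpr int cameraHeight = 32;  // assumed camera height in texture pixels
constexpr int groundRows = screenHeight - horizon - 1;

// Returns, for each row below the horizon, how many texture pixels one
// screen pixel covers, as an unsigned 8.8 fixed point value.
std::array<std::uint16_t, groundRows> buildScaleTable()
{
    std::array<std::uint16_t, groundRows> table {};
    for (int row = 0; row < groundRows; ++row)
    {
        // Rows nearer the horizon are further away, so each screen pixel
        // covers more of the texture and the road looks smaller there.
        table[row] = static_cast<std::uint16_t>((cameraHeight << 8) / (row + 1));
    }
    return table;
}
```

With these numbers the row just under the horizon covers 32 texture pixels per screen pixel, while the bottom row covers roughly half a texture pixel, which is what produces the receding floor.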

Whereabouts?

Changing it each scanline is a bit of a spanner in the works.

Maybe I’m too used to the typical perspective projections.

That’s an even bigger spanner.

At the end of the “Function” chapter:

However, many games create additional effects by setting a different transformation matrix for each scanline. In this way, pseudo-perspective, curved surface, and distortion effects can be achieved

One way to approach this kind of functionality is to start with @spinal’s bitmap rotator, add tiling support, add zoom functionality (zoom-rotator), and start to experiment with what happens if the scaling factor changes in each scanline…

I am correcting myself: actually, Pokitto’s screen buffer has horizontal scanlines, but they are drawn to the screen device as vertical strips.


It depends which effects those are.

If the goal is to entirely replicate mode 7 then we would have to replicate the ‘one matrix per scanline’ thing, but if we’re happy with the effects just a single matrix can give then we could just make a trimmed down version (and maybe call it mode 6 if people aren’t happy calling it mode 7).

I suspect that doing many NPOT (non-power-of-two) multiplications per pixel, as a matrix would require, would be too much for the Pokitto CPU, but I am happy to be proven wrong.

Two words: lookup tables

You only need to know the U and V vector lengths per scanline. Then step through the ground bitmap using those vectors. The length of U + V is the same for each horizontal pixel on a scanline, because U and V are something divided by the Z distance from the viewer, which is constant for each scanline.
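Here is one way that stepping could look, as a sketch under several assumptions: a 110 pixel wide row, a 64x64 wrapping ground texture, 16.16 fixed point, and a camera described by a position, heading angle, distance and scale for the row. The names `RowStep`, `makeRowStep` and `drawRow` are hypothetical. The floating point work happens once per scanline, and the inner loop uses additions only.

```cpp
#include <cmath>
#include <cstdint>

constexpr int screenWidth = 110;  // assumed row width
constexpr int textureSize = 64;   // assumed square, power of two texture

// Start position and per-pixel step for one scanline, in 16.16 fixed point.
struct RowStep { std::int32_t u, v, du, dv; };

inline std::int32_t toFixed(float value)
{
    return static_cast<std::int32_t>(value * 65536.0f);
}

// Per-scanline setup: 'distance' is how far ahead the row lies and 'scale'
// is how many texture pixels one screen pixel covers on that row.
RowStep makeRowStep(float cameraX, float cameraY, float angle, float distance, float scale)
{
    const float forwardX = std::cos(angle), forwardY = std::sin(angle);
    const float rightX = -forwardY, rightY = forwardX;
    const float halfWidth = screenWidth / 2;

    // Start at the left edge of the row, then step to the right.
    return RowStep {
        toFixed(cameraX + forwardX * distance - rightX * scale * halfWidth),
        toFixed(cameraY + forwardY * distance - rightY * scale * halfWidth),
        toFixed(rightX * scale),
        toFixed(rightY * scale)
    };
}

// Fills one screen row by stepping through the ground texture.
// No multiplication by the matrix happens per pixel, only two additions.
void drawRow(std::uint8_t * row, const std::uint8_t * texture, RowStep step)
{
    for (int x = 0; x < screenWidth; ++x)
    {
        const int tx = (step.u >> 16) & (textureSize - 1);
        const int ty = (step.v >> 16) & (textureSize - 1);
        row[x] = texture[ty * textureSize + tx];
        step.u += step.du;
        step.v += step.dv;
    }
}
```

The `distance` and `scale` arguments are exactly the kind of per-scanline values that could come from a precomputed table instead of being calculated every frame.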

If we do it with fixed point calculations like the original mode 7 then it probably won’t be quite as bad as using floating point numbers (and will look closer to the original as well since it will have the same rounding errors).

It depends how fast integer multiplication is on the Pokitto.

The scanlines going in a different direction is still an issue.

It means that to match the behaviour of the original we’d need multiple matrices per Pokitto scanline.

Plausible, but it depends on how many entries would be needed.

15840 bytes at 16-bit resolution.

Edit: I mean, of course, 16-bit fixed point values.