By 2D I mean a 2D screen and 3D space, as opposed to the 1D raycasting in 2D space that I’ve done with my library. That is, you render a 3D image by casting a ray for every pixel on the screen, not just for every column. If you then add secondary and shadow rays, it becomes ray tracing, which you can try too, but the first step is to do ray casting (without recursively cast rays).

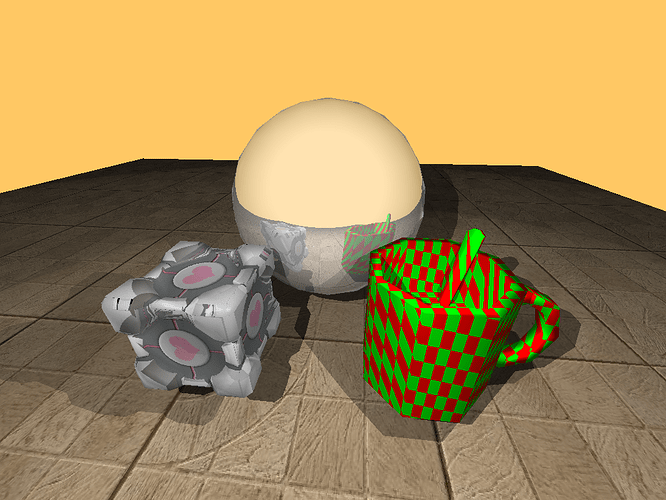
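To make the "one ray per pixel" idea concrete, here is a minimal sketch of generating the primary rays. The pinhole camera at the origin looking down +Z, the 90° field of view and the names `primary_ray` and `all_primary_rays` are all assumptions made for this example, and it uses floats for readability rather than the integer math you would probably want on the device.

```python
import math

def primary_ray(px, py, width, height, fov_deg=90.0):
    """Return a normalized direction for the ray through pixel (px, py).

    Assumes a pinhole camera at the origin looking down +Z, with +X to the
    right and +Y up; these conventions are chosen for the example only.
    """
    half_w = math.tan(math.radians(fov_deg) / 2.0)
    half_h = half_w * height / width
    # Map the pixel centre to [-1, 1] on both axes (y flipped: row 0 is the top).
    u = ((px + 0.5) / width) * 2.0 - 1.0
    v = 1.0 - ((py + 0.5) / height) * 2.0
    x, y, z = u * half_w, v * half_h, 1.0
    length = math.sqrt(x * x + y * y + z * z)
    return (x / length, y / length, z / length)

def all_primary_rays(width, height, fov_deg=90.0):
    """One ray per pixel, row by row (not one per column as in 2D raycasting)."""
    return [[primary_ray(px, py, width, height, fov_deg) for px in range(width)]
            for py in range(height)]
```

For a 3×3 screen, `all_primary_rays(3, 3)[1][1]` is the ray through the centre pixel and points straight ahead along +Z.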

The basic implementation is actually pretty simple: you find the intersection of a line with a given object by solving an equation, which is very simple e.g. for spheres. It gets more interesting if you don’t use floats and want to optimize it. If you have a static (non-animated) environment and a lot of objects, you can use acceleration structures such as quadtrees for culling and fast intersections, but I wouldn’t do this. I’d only try to render a few spheres and planes, maybe cylinders and cubes, … Bounding spheres for non-spherical objects are a simple technique you could try.
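As an illustration of "solving an equation" for a sphere, the sketch below substitutes the ray `origin + t * direction` into the sphere equation and solves the resulting quadratic for `t`. The function name `ray_sphere` and the tuple-based vectors are assumptions for the example, and it uses floats for clarity; a fixed-point version would follow the same steps. The same test could also serve as a cheap bounding-sphere check in front of a more expensive shape.

```python
import math

def ray_sphere(origin, direction, center, radius):
    """Return the nearest positive hit distance t, or None if the ray misses.

    `direction` is assumed to be normalized, so the quadratic's leading
    coefficient is 1 and t is a distance along the ray.
    """
    # Vector from the sphere centre to the ray origin.
    ox = origin[0] - center[0]
    oy = origin[1] - center[1]
    oz = origin[2] - center[2]
    # Solve t^2 + 2*b*t + c = 0 for points origin + t*direction on the sphere.
    b = ox * direction[0] + oy * direction[1] + oz * direction[2]
    c = ox * ox + oy * oy + oz * oz - radius * radius
    disc = b * b - c
    if disc < 0.0:
        return None  # no real roots: the line misses the sphere
    root = math.sqrt(disc)
    t = -b - root  # nearer intersection
    if t <= 0.0:
        t = -b + root  # origin is inside the sphere, use the exit point
    return t if t > 0.0 else None
```

A ray from the origin along +Z hits a unit sphere centred at (0, 0, 5) at distance 4, and a sphere behind the camera gives `None`.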

If this is too slow for realtime, you can use tricks, e.g. first quickly render a low-resolution (subsampled) frame and then, if the camera didn’t move, keep casting additional rays to improve the resolution. Actually I think there might be a more elegant way if you don’t use a framebuffer:

Don’t cast rays strictly from top left to bottom right pixel by pixel, but keep jumping by some offset. E.g. you first draw the top left pixel of the screen, then jump 4 pixels to the right and so on. When you get to the end of the screen, go to the second pixel from the top left and repeat this. So on a 3×3 screen with an offset of 4, the order in which the pixels get drawn could be e.g.:

035

714

682
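The jumping order described above can be sketched as a small generator. The names `interleaved_order` and `order_grid` are made up for this example; pixels are treated as one linear index running row by row, which is an assumption about how the screen is addressed.

```python
def interleaved_order(num_pixels, step):
    """Yield pixel indices 0, step, 2*step, ... then 1, 1+step, ... and so on."""
    for start in range(min(step, num_pixels)):
        for index in range(start, num_pixels, step):
            yield index

def order_grid(width, height, step):
    """Return a grid whose cells hold the position of each pixel in the draw order."""
    grid = [[0] * width for _ in range(height)]
    for n, index in enumerate(interleaved_order(width * height, step)):
        grid[index // width][index % width] = n
    return grid
```

`order_grid(3, 3, 4)` gives `[[0, 3, 5], [7, 1, 4], [6, 8, 2]]`, i.e. the 3×3 pattern shown above. Every pixel is visited exactly once per full pass, so the image keeps filling in evenly for as long as the camera stands still.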

Shading is the next step. Again, it’s not difficult, but performance is an issue. Start with flat shading and distance fog (cheap), then try Gouraud, then Phong etc. For simplicity I’d only have one global directional light. You can continue with textures. Procedural ones are especially useful here: a simple checkerboard can be as cheap as sampling a bitmap texture, and actually it will be cheaper, because for a 2D texture you need extra computation to get UV coords from the 3D coords of the intersection. The advantage of raycasting (and ray tracing) is that you don’t need perspective correction of texture coordinates, it is correct by default.
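A rough sketch of the cheap end of this list: a diffuse term for a single directional light, a linear distance fog, and a 3D checkerboard evaluated straight from the hit position with no UV lookup. The function names, the linear fade to black and the `cell` parameter are assumptions for the example, not a prescribed design, and floats are again used only for readability.

```python
import math

def lambert(normal, light_dir):
    """Diffuse intensity in [0, 1] for one directional light.

    `normal` and `light_dir` are assumed normalized; `light_dir` points
    from the surface towards the light.
    """
    d = normal[0] * light_dir[0] + normal[1] * light_dir[1] + normal[2] * light_dir[2]
    return max(0.0, d)

def fog(intensity, distance, fog_end):
    """Linearly fade intensity to 0 as distance approaches fog_end."""
    k = max(0.0, 1.0 - distance / fog_end)
    return intensity * k

def checkerboard(point, cell=1.0):
    """Procedural 3D checker: returns 0 or 1 straight from the hit position."""
    x = math.floor(point[0] / cell)
    y = math.floor(point[1] / cell)
    z = math.floor(point[2] / cell)
    return (x + y + z) & 1
```

A pixel’s brightness could then be something like `fog(lambert(n, l), t, fog_end)`, with `checkerboard(hit)` choosing between two base colours.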

The Doom and Duke Nukem 3D engines aren’t raycasting. Doom is a BSP renderer (its maps are made of sectors too), while the Build engine behind Duke Nukem 3D renders sector by sector through portals rather than with a BSP tree; it is just more advanced and uses additional tricks. No one has done that on Pokitto so far, so you can add it to your list (it’s on mine as well). The main reason I haven’t started it is that you need to create extra tools for creating and compiling maps. I preferred raycasting because you can edit the maps in the source code and don’t have to compile them.

Maybe the right solution is “tiled rendering” ???


That would be really interesting to see (I’ve only seen a very slow, unoptimized floating point port so far). I think using only a few simple shapes like spheres and planes could allow even interactive framerates.

Even an offline renderer is useful: you could e.g. render environments for games like Myst at runtime, without having to store the images anywhere.

