I used to be quite good at using Blender,

but it’s been quite a few years now since I last used it for any extended length of time.

Try the new 2.8, it’s been hugely improved (though it’s still in beta). The objection to Blender’s unnecessarily complex GUI is no longer valid, they’ve completely redesigned it. Also Eevee changed my life, most rendering I do now literally takes a thousandth of the time it used to take with Cycles, while the quality stays comparable.

I’ll put it on my ever growing todo list,

but it will probably be a while before I have cause to.

It didn’t really bother me that much to be honest.

I got used to the UI. I could never remember the shortcut keys though.

I tended to be very precise with my placements though.

I liked using exact numbers and/or rounding up so that the models were more regular/precise and I could do the maths in my head.

Also in one way that’s a bad thing because it means tutorials using the old UI layout will be harder to follow.

Has Nintendo been sponsoring them? :P

When I started out Cycles was still in beta and we were using the old engine.

(I was disappointed that they discontinued that. The results weren’t as realistic but it was easier to use. Especially lighting. And results were less grainy.)

That also didn’t bother me too much.

When I was doing the actual modelling I’d turn the settings as low as possible,

then when it was time to render the final thing I’d

Although I will admit there was one assignment that I had to do for college where the time constraints were so pressing that I ended up resorting to doing ‘headless’ rendering.

Later I discovered that the main reason it took so long is because one of the features I was using had only been implemented on the CPU and not on the GPU.

The really annoying thing is that mere weeks later they implemented GPU rendering for it.

If they’d done that just a few weeks sooner then I would have had enough time to extend the length of my animation by at least 5 seconds and it might have bumped my grade up.

Sounds like a pretty ancient version, I hope you’ll be pleasantly surprised how far Blender has gotten since. It’s one of the most active open-source projects thanks to the sponsorships (not sure if Nintendo is one of them).

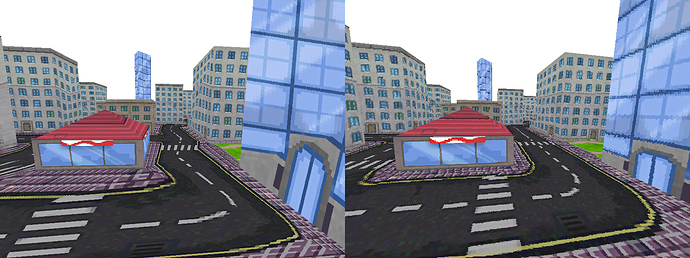

The issue at hand now is perspective correction (PC) – you don’t really need it when rendering a model that doesn’t span much depth, but in game levels specifically the distortion is extremely visible (left without PC, right with PC):

Per-pixel PC can’t even be considered for Pokitto, it’s expensive as hell and requires multiple divisions per pixel. What you can do is what the PS1 did, since it didn’t have HW-accelerated PC either – they simply subdivided the models into more triangles, which reduces the distortion.
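For illustration, here is a minimal sketch of one common way to subdivide a triangle: split it at the edge midpoints into four smaller ones. The `Vertex` and `Triangle` types and the function names are made up for this example, and this is not claimed to be exactly what PS1 games did. Because the midpoints are computed in world space, texture coordinates at the new vertices are correct, so affine mapping only has to cover smaller spans.

```c
#include <assert.h>

/* Hypothetical vertex: world-space position plus texture coordinates. */
typedef struct { double x, y, z, u, v; } Vertex;
typedef struct { Vertex a, b, c; } Triangle;

/* Midpoint of an edge; every attribute is averaged in world space. */
static Vertex midpoint(Vertex p, Vertex q)
{
    Vertex m = { (p.x + q.x) / 2, (p.y + q.y) / 2, (p.z + q.z) / 2,
                 (p.u + q.u) / 2, (p.v + q.v) / 2 };
    return m;
}

/* Split t into four smaller triangles that keep the original winding order. */
static void subdivide(Triangle t, Triangle out[4])
{
    assert(out != 0);

    Vertex ab = midpoint(t.a, t.b);
    Vertex bc = midpoint(t.b, t.c);
    Vertex ca = midpoint(t.c, t.a);

    out[0] = (Triangle) { t.a, ab, ca };  /* corner at a */
    out[1] = (Triangle) { ab, t.b, bc };  /* corner at b */
    out[2] = (Triangle) { ca, bc, t.c };  /* corner at c */
    out[3] = (Triangle) { ab, bc, ca };   /* centre */
}
```

Each call quadruples the triangle count, which is why doing it ahead of time for a whole model gets expensive quickly.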

Now I can subdivide the model in Blender, but that’s not ideal, it creates so much more data to store, so many more triangles to render and complex models to work with. The best thing would be to do a run-time adaptive subdivision only of the triangles that will actually get drawn in a given frame and that are beyond some size threshold. This shouldn’t be that expensive because it will apply only to a few triangles in the frame, but the problem is the subdivided triangles are non-standard triangles – I have a triangle drawing function that has a hard-coded behavior to compute barycentric coordinates (needed for texturing etc.) each going from 0 to 512, with their sum always equal to 512 – this hard-coding makes the function fast. The subtriangles would have different barycentric coordinates, they’d have to map the normal coordinates to some subspace for each pixel, and that could be almost as expensive as PC. So I don’t know, will have to find some way around this. Ideas are welcome.
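To make the per-pixel remapping cost concrete, here is a rough sketch of what mapping a subtriangle’s barycentric coordinates back to the parent triangle could look like. The `Bary` type, `BARY_ONE` and `sub_to_parent` are hypothetical names for this illustration and are not taken from the actual drawing function; the only thing borrowed from the description above is that the three coordinates sum to 512.

```c
#include <assert.h>
#include <stdint.h>

#define BARY_ONE 512  /* the three coordinates always sum to this */

/* Hypothetical barycentric coordinate triple. */
typedef struct { int32_t b[3]; } Bary;

/* corners[k] holds the parent-space coordinates of the subtriangle's k-th
   corner; local holds the pixel's coordinates inside the subtriangle.
   The result is the pixel's coordinates inside the parent triangle. */
static Bary sub_to_parent(const Bary corners[3], Bary local)
{
    assert(local.b[0] + local.b[1] + local.b[2] == BARY_ONE);

    Bary r;
    for (int i = 0; i < 3; ++i)
    {
        /* Weighted sum of the corners; dividing by 512 could be a shift. */
        r.b[i] = (local.b[0] * corners[0].b[i] +
                  local.b[1] * corners[1].b[i] +
                  local.b[2] * corners[2].b[i]) / BARY_ONE;
    }
    return r;
}
```

That is nine multiplications per pixel in this naive form, which matches the worry that it might cost nearly as much as the correction it is trying to avoid.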

What about multiple LODs (levels of detail)?

Load the high-polygon model only when it’s needed.

I’ve used it since then, that’s just where it was when I first started using it.

The version I have installed currently is 2.77a.

Wouldn’t you be able to reduce the number of divisions by multiplying by the inverse?

Use the blue thing, use calipers whilst swallowing a soul gem. :P

More seriously (you’ve probably already read the Wikipedia article so you probably already know this anyway), you could just correct every Nth pixel and interpolate the results.

A different approach was taken for Quake, which would calculate perspective correct coordinates only once every 16 pixels of a scanline and linearly interpolate between them

Or approximate the correction with a faster equation.

Another technique was approximating the perspective with a faster calculation, such as a polynomial. Still another technique uses 1/z value of the last two drawn pixels to linearly extrapolate the next value. The division is then done starting from those values so that only a small remainder has to be divided[14] but the amount of bookkeeping makes this method too slow on most systems.

Nice idea as well. It still requires extra setup, but I may use this if I fail to implement some approximation. I have actually found an article about this where they show an optimization – instead of per-pixel, you compute it every nth pixel and linearly interpolate in between. Am trying to implement it now, seems like it’s a big speedup.

http://www.lysator.liu.se/~mikaelk/doc/perspectivetexture/
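A rough sketch of the every-nth-pixel idea, for a single texture coordinate along one scanline. It uses doubles for readability rather than the fixed-point integers a Pokitto renderer would want, and `scanline_u` and its parameters are names invented for this example, not code from the linked article.

```c
#include <assert.h>

/* Fill out[0..width-1] with texture coordinate u for each pixel of a scanline.
   (u0, z0) belong to the left end, (u1, z1) to the right end.
   step is the number of pixels between exact perspective corrections. */
static void scanline_u(double u0, double z0, double u1, double z1,
                       int width, int step, double *out)
{
    assert(width > 1 && step > 0 && z0 > 0.0 && z1 > 0.0);

    /* u/z and 1/z are linear in screen space, so they can be interpolated. */
    double uz0 = u0 / z0, uz1 = u1 / z1;
    double iz0 = 1.0 / z0, iz1 = 1.0 / z1;

    double left_u = u0;  /* exact u at the start of the current span */

    for (int x0 = 0; x0 < width; x0 += step)
    {
        int x1 = x0 + step;
        if (x1 > width - 1)
            x1 = width - 1;

        /* Exact, perspective-correct u at the end of the span. */
        double t = (double) x1 / (width - 1);
        double right_u = (uz0 + (uz1 - uz0) * t) / (iz0 + (iz1 - iz0) * t);

        /* Inside the span only an addition per pixel is needed. */
        int len = x1 - x0;
        double du = (len > 0) ? (right_u - left_u) / len : 0.0;
        double u = left_u;

        for (int x = x0; x <= x1; ++x)
        {
            out[x] = u;
            u += du;
        }

        left_u = right_u;
    }
}
```

The divisions now happen once per span instead of once per pixel. With `step` equal to 1 this degenerates to full per-pixel correction, and with a very large `step` it becomes plain affine mapping.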

Good point as well, thank you. I actually went and checked if I could do it, but the problem is I don’t have that inverse and can’t obtain it in a cheap way. I am actually doing the division exactly in order to obtain that inverse – the value I have is an inverse itself, and it has to be so because this inverse (unlike the original value) is linear in the screen space so I can linearly interpolate it. I guess I am not good at explaining it, but it’s in that article I’ve linked.

Actually not the one you’re talking about, this seems very useful! Gotta read that all carefully.

Sounds like a good idea… on the PS1, since that has a matrix multiplication coprocessor. >_<

Welp. :P

z = 1 / (1/z)

That’s confusing. Isn’t that redundant?

(1 / 5 -> 0.2, 1 / 0.2 -> 5)

I couldn’t make much sense of the article.

I tried to read the code but that didn’t make much sense either.

All the variables have terrible names and the variables are very poorly used (being defined before they’re used instead of when they’re used, and constantly reassigning to the same variables instead of declaring new ones when appropriate).

drawtpolyperspsubtriseg and drawtpolyperspsubtri are some of the worst function names I’ve ever encountered.

Might have another read tomorrow when I’m more awake.

That and everything below it in that section.

Matrices and vertices. Presumably it had SIMD instructions.

90,000 polygons per second with texture mapping, lighting and Gouraud shading is pretty impressive for the time.

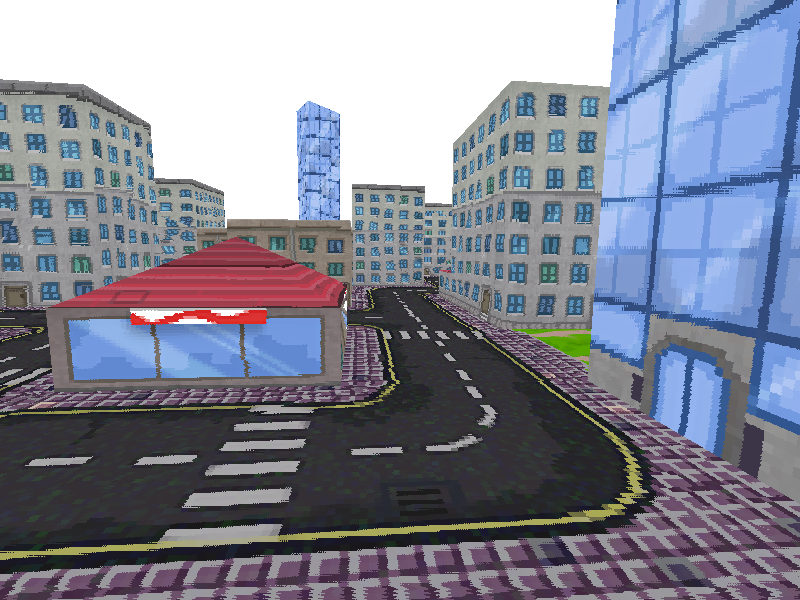

Quake-style approximation by 32 pixels:

Runs at 67 FPS vs 70 FPS without any PC at all, full PC runs at 50, so this is a success I guess.

Yep, actually what @Pharap linked is pretty useful, it says world-space subdivision was used on PS basically for the reason you say, but

Software renderers generally preferred screen subdivision because it has less overhead. Additionally, they try to do linear interpolation along a line of pixels to simplify the set-up (compared to 2d affine interpolation) and thus again the overhead (also affine texture-mapping does not fit into the low number of registers of the x86 CPU; the 68000 or any RISC is much more suited).

It took me some time to understand it as well. For simplicity suppose you do perspective correction only on a line between points A and B. The principle is this:

- For PC you need the value of Z (depth, distance from camera) at each pixel you draw. Let’s only focus on how to get this correct value of Z.

- You’d probably think of taking the Z of the first point (A) and the Z of the second point (B) and by linear interpolation getting the intermediate Z values of the points you’re currently drawing on the screen. But you can’t do this, because the Z value from A to B isn’t linear on the screen because of the perspective! It would only be true in 2D space. In 3D, it’s a little “bent”, depending on how far away A and B are in the Z dimension.

- But, if you take 1/Z instead of Z, you find that that is linear! (It has to do with the fact that what perspective does is divide by Z.) So you compute 1/Z at A, then 1/Z at B and linearly interpolate between these values.

- At each point you’re drawing you have the interpolated 1/Z of your point. But you need Z, so at this time you do 1/(1/Z) to obtain it.

I can’t see any way to avoid this division.
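The steps above can be sketched in C roughly like this. It uses doubles for readability instead of integers, and `lerp`, `depth_affine` and `depth_perspective` are names made up for the illustration.

```c
#include <assert.h>

/* Plain linear interpolation between a and b, with t in [0, 1]. */
static double lerp(double a, double b, double t)
{
    return a + (b - a) * t;
}

/* Naive depth: interpolates Z directly along the screen-space line.
   This is the version that is wrong under perspective. */
static double depth_affine(double za, double zb, double t)
{
    return lerp(za, zb, t);
}

/* Perspective-correct depth: interpolate 1/Z (which is linear on the
   screen), then invert the result. This is the unavoidable division. */
static double depth_perspective(double za, double zb, double t)
{
    assert(za > 0.0 && zb > 0.0);
    return 1.0 / lerp(1.0 / za, 1.0 / zb, t);
}
```

For A at Z = 1 and B at Z = 3, the pixel halfway along the line on screen has depth 1.5 with the 1/Z approach, whereas the naive interpolation gives 2.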

Presumably this image is from the SDL version rather than the Pokitto version?

Ok, I see the problem.

The crux of the matter is that the two zs are effectively different entities.

It’s not z = 1 / (1 / z), it’s z = 1 / lerp(1 / A.z, 1 / B.z, t).

This is why I prefer to use code-like explanations to mathematical explanations.

Mathematical explanations tend to leave out important details because they don’t have the same constraints because they’re purely theoretical.

What is the possible range of Z?

Yes, this is all SDL so far.

Well, a mathematical explanation is an equation, which is the same thing as code. They use a similar equation on Wikipedia:

(EDIT: unfortunately the autoedit made it an equation for ants.)

Explanations in words are always just imperfect ambiguous human language.

32bit, too big for a table if that’s what you’re thinking about.

It isn’t the same as code.

Equations are declarative, not imperative.

They describe relationships, not processes.

The what, not the how.

(Not to mention the variable names are always impenetrably terse.)

Either way, I’d like some diagrams at least.

So are explanations in mathematical symbols.

No symbols have inherent meaning, the meaning is ascribed to them by humans.

That’s annoying.

I was indeed thinking of a lookup table.

So right in front of the camera is 0 and the vanishing point is 4294967295?

Or is this floats, or fixed points?

Otherwise I can only think to use a fast approximation somehow.

Not literally, but in terms of precision and accuracy of communicating ideas they are, provided they are valid. If someone doesn’t define a symbol, that’s as if you don’t declare a variable that you use. Behavior of the same code can also differ between platforms, e.g. ints can have different widths – if you provide code without specifying the platform, you can get ambiguous meaning too. Anyway, properly written equation or code with all the context should communicate the idea with absolute precision – at least as much absolute as possible in this universe, right?

It is universally agreed that math is the most precise thing ever.

Basically yes (there is a near plane > 0, the closest possible distance, and the int is signed, but after transformation to screen space we don’t want to have negative values). Anyway, now I think the problem has been solved, it’s no longer critical to get rid of this division.

My problem now is the code has gotten a bit messy

Looks very good! It would be interesting to see what the performance is on the Pokitto.

It depends on the language.

Also good code shouldn’t depend on platform specific details and should abstract those details away where possible.

That’s why the fixed width types were introduced.

Modern code should avoid using the fundamental types.

It depends on what’s being communicated.

I often find that to simply get the most basic outline of an idea across,

words and pictures tend to be the most effective tools.

For things like data structures and geometry, diagrams are invaluable.

(The human brain is very visually oriented, as is the norm for many apes).

Code on the other hand is the most useful for demonstrating the implementation of the idea.

Maths is somewhere between the two, but its usefulness depends on the idea being expressed,

and it’s most effective when supplemented with diagrams.

For example, I’m always sending people ‘maths is fun’ articles because they make good use of diagrams and explanations.

Here’s some prime examples:

- https://www.mathsisfun.com/algebra/vectors.html

- https://www.mathsisfun.com/combinatorics/combinations-permutations.html

- https://www.mathsisfun.com/geometry/vertices-faces-edges.html

- https://www.mathsisfun.com/binary-number-system.html

Universally means all humans.

The set of everyone includes me, and I disagree, therefore the statement is false.

(Or at the very least hyperbole.)

Also I can’t read Czech, but only the third link actually points to a quoted sentence, so I think the others are telling me that I’m not allowed to read those pages for some reason.

I had a skim of the book that I actually could read and it’s full of that stuffy, clunky, overly formal language that makes mathematics so inaccessible.

Fair enough.

I like thinking about these problems though.

Even if the solution doesn’t come in handy today,

it might one day in the distant future.

For example, recently I solved a problem in someone else’s project by applying a technique I was using in one of my projects to solve a different but tangentially related problem.

I’d suggest scrapping the C-like code and opting for some C++-like code,

but I know what the answer would be. :P

It is very rare that I say this because usually graphics don’t sway me,

but that is some really impressive realism.

Actually, I am probably going to hate that game. I despise games where there is “go here” flashing above your target area / objective / person. This looks like a glorified rail shooter.

I prefer games like this. No explanations, no HUDs, no hand holding, no second chances. Just hard and frustrating all the way.

The aesthetic is what puts me off.

I don’t like settings that are too urban or industrial.

I prefer natural landscapes. Wood, stone and water.

There’s a word for this. It’s ‘masochistic’. :P

The former game reminds me of Borderlands.

The latter reminds me more of Skyrim.

Originally I was just flicking through the 2077 video.

I just stopped to watch a small section and blimey that’s a lot of swearing.

I always have to laugh when people do that.

Swear too often and it loses its impact - it ends up just being white noise.

Have you played The Witness? I loved that game, hugely because it was this, to the extreme.

My brother just told me about Cyberpunk literally a few minutes ago, otherwise I’d have no idea what’s going on. I’ve long lost track of the modern games.